1.Market Breadth is Declining.

SPDR BLOG

When market gains are being driven by a few stocks, it means the market’s breadth—the number of stocks moving higher in tandem—is narrowing. This not only indicates that a rally may be somewhat unsustainable, but it may also indicate a challenging time for active managers. When only a few securities lead the charge, it limits the power of individual stock pickers.

As shown above, the market keeps setting new highs while the number of stocks surging ahead (measured by the number of S&P 500 stocks trading above their 200-day moving average) is falling. This could signal rocky days ahead for a stock market looking to move higher.

Source: Bloomberg Finance L.P., as of 5/31/2017.

Past performance is not a guarantee of future results. Index returns are unmanaged and do not reflect the deduction of any fees or expenses.
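The breadth measure described above (the count of index members trading above their 200-day moving average) can be sketched in a few lines. This is a minimal illustration, not the method behind the SPDR chart: the function names, the `price_history` layout, and the toy tickers are all assumptions made for the example.

```python
from statistics import fmean


def sma(prices, window):
    """Simple moving average of the last `window` closes, or None if too short."""
    if len(prices) < window:
        return None
    return fmean(prices[-window:])


def breadth_above_ma(price_history, window=200):
    """Count tickers whose latest close is above their `window`-day average.

    `price_history` (hypothetical layout) maps ticker -> list of daily
    closes, oldest first. Returns (count_above, count_measured).
    """
    above = measured = 0
    for closes in price_history.values():
        avg = sma(closes, window)
        if avg is None:
            continue  # not enough history to compute the average
        measured += 1
        if closes[-1] > avg:
            above += 1
    return above, measured


# Made-up toy data, using a 3-day window so the arithmetic is easy to follow.
toy = {
    "AAA": [10, 11, 12, 13],  # latest close 13 is above the 3-day average of 12
    "BBB": [20, 19, 18, 17],  # latest close 17 is below the 3-day average of 18
    "CCC": [5, 5],            # too little history, so it is skipped
}
print(breadth_above_ma(toy, window=3))  # (1, 2)
```

Run daily over the index constituents, a falling first number while the index itself rises is the narrowing-breadth pattern the post describes.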

2.Tech Earnings Growing at Double Digits and NASDAQ Just Broke Out of 20 Year Consolidation.

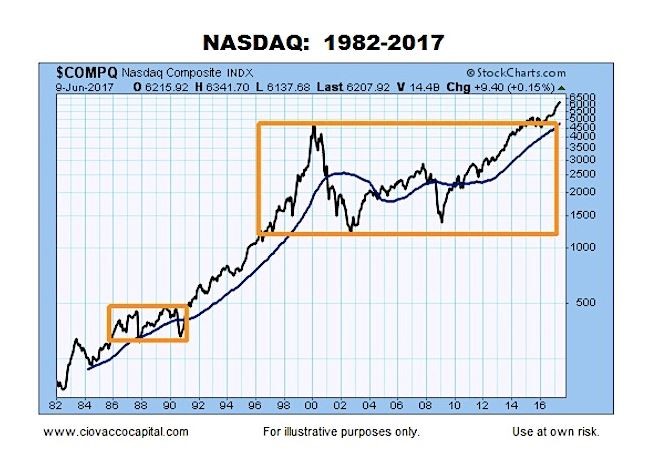

But then check out his so-called view from 60,000 feet — that is, a look at the Nasdaq Composite’s progression since 1982:

While the first chart could be read as techs bubbling over, the long-term view shows “the NASDAQ was able to make it back to the highs made in 2000 and recently broke out of a 20-year period of consolidation,” says Ciovacco.

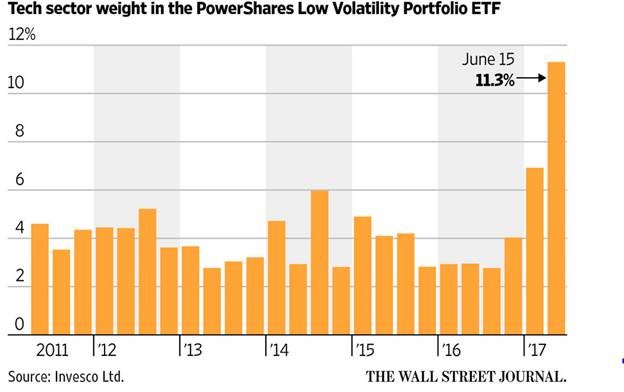

3.Even “Low Volatility” is Long Tech….

Technology now represents 11.3% of the $7 billion PowerShares S&P 500 Low Volatility Portfolio (SPLV) exchange-traded fund, which buys the least-volatile S&P 500 stocks. That compares with 2.9% a year ago, according to Invesco Ltd., the ETF’s sponsor. The development means investors holding a mix of seemingly disparate funds in the name of diversification could be surprised to find heavy concentrations in the same group of in-favor stocks, making them vulnerable in bouts of selling. Rules-based funds and strategies that gradually added tech stocks could sell them in a downturn, adding to price declines.

From Dave Lutz at Jones.

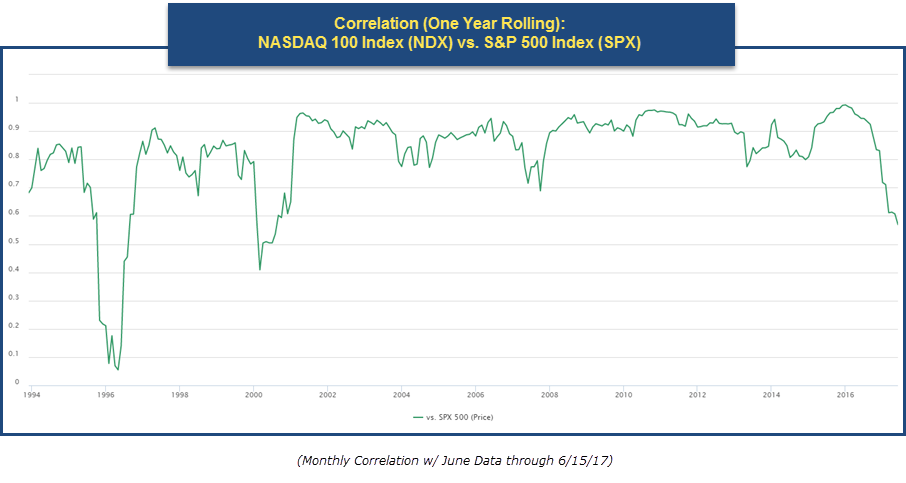

4.Correlation Between NASDAQ 100 and S&P Diverging as Tech Takes Off.

In the graph below, we have plotted the trailing 12-month correlation of the Nasdaq 100 versus the S&P 500. There have been times when the two indexes were highly correlated and times when they were extremely uncorrelated. After a stretch between 2001 and 2016 when the correlation between the two was fairly high (ranging from 0.9 to 1), the relationship between the two indexes has become weaker recently. Notice how the correlation has just fallen below 0.60, the lowest reading since right after the Tech Crash in the early 2000s. The fall in correlation is an indication the Nasdaq 100 and the S&P 500 are beginning to move more independently from each other, which probably doesn’t come as a surprise after the recent trading action that has transpired over the past couple of weeks and the excess returns we have witnessed.

From Dorsey Wright

https://oxlive.dorseywright.com/research/bigwire/2017/06/16/06-16-2017
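For readers who want to reproduce a trailing correlation like the one in the Dorsey Wright excerpt, here is a minimal sketch. The excerpt does not say which return frequency or data source was used, so the `window` parameter, the function names, and the toy return series below are assumptions for illustration only.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (None if either is flat)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return None  # correlation is undefined for a constant series
    return cov / sqrt(var_x * var_y)


def rolling_correlation(returns_a, returns_b, window):
    """Correlation over each trailing block of `window` observations."""
    if len(returns_a) != len(returns_b):
        raise ValueError("return series must have the same length")
    return [
        pearson(returns_a[end - window:end], returns_b[end - window:end])
        for end in range(window, len(returns_a) + 1)
    ]


# Made-up periodic returns for two hypothetical indexes.
index_a = [0.01, 0.02, -0.01, 0.03, 0.00, -0.02]
index_b = [0.02, 0.03, -0.02, 0.01, 0.01, 0.02]
print(rolling_correlation(index_a, index_b, window=4))
```

With daily returns, a window of roughly 252 observations would approximate a trailing 12 months; with monthly returns, a window of 12 would. Each value in the output list corresponds to one point on a chart like the one described.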

5.Economic Surprise Index….Complete Divorce from Market Making New Highs??

Bloomberg once again points out the divergence between the stock market and the economic surprise index.

source: Bloomberg

6.MLPs Tracking Oil Again.

AMLP Sell-Off Since February….50-day about to cross below 200-day to the downside.

One Year Tracking Energy SPDR…XLE vs. MLP ETF.

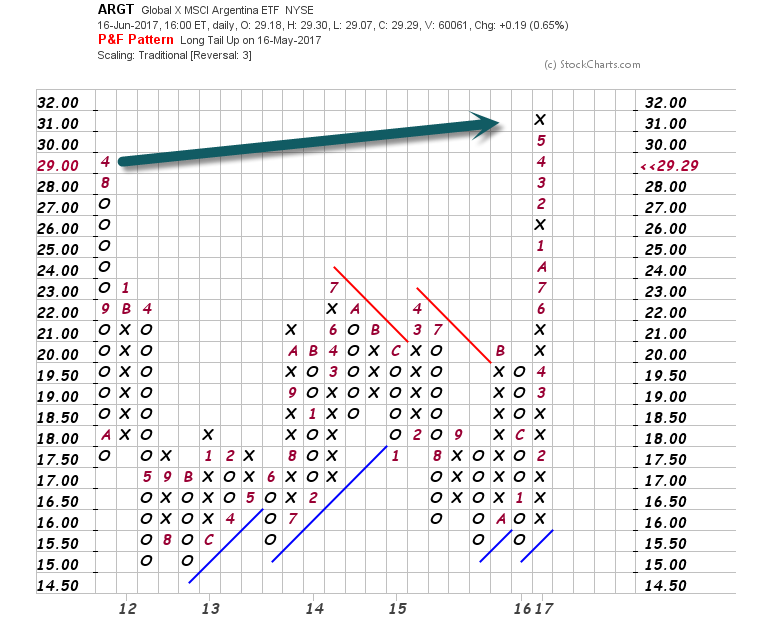
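The 50-day/200-day crossover mentioned above for AMLP can be detected mechanically. The sketch below is an illustration under assumptions: the function names are invented for this example, it uses simple (not exponential) moving averages, and the price list is toy data with shortened windows rather than actual AMLP closes.

```python
def sma_series(prices, window):
    """Simple moving average aligned with `prices`; None until enough data."""
    averages = []
    running = 0.0
    for i, price in enumerate(prices):
        running += price
        if i >= window:
            running -= prices[i - window]  # drop the close that left the window
        averages.append(running / window if i >= window - 1 else None)
    return averages


def bearish_crossovers(prices, fast=50, slow=200):
    """Indices where the fast average moves from at-or-above to below the slow one."""
    fast_ma = sma_series(prices, fast)
    slow_ma = sma_series(prices, slow)
    crosses = []
    for i in range(1, len(prices)):
        values = (fast_ma[i - 1], slow_ma[i - 1], fast_ma[i], slow_ma[i])
        if None in values:
            continue  # both averages need a full window on both days
        if fast_ma[i - 1] >= slow_ma[i - 1] and fast_ma[i] < slow_ma[i]:
            crosses.append(i)
    return crosses


# Toy series that rises and then falls, with 2- and 4-day windows for brevity.
closes = [1, 2, 3, 4, 5, 4, 3, 2, 1]
print(bearish_crossovers(closes, fast=2, slow=4))  # [6]
```

With real daily closes and the default `fast=50, slow=200`, a non-empty result marks the days on which the downside cross occurred.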

7.Argentina Moving into EEM ETF..Breaks Out to All-Time Highs.

In an indexed world, these adds have a huge effect.

U.S. index provider MSCI is likely to promote Argentina to its $1.5T Emerging Markets Index (ETF: EEM) this week, prompting investment funds from all over the world to buy Argentine equities. The Merval stock index has risen 24% YTD, while the country recently commenced several sovereign bond sales, ending a decade of isolation from international credit.

www.seekingalpha.com

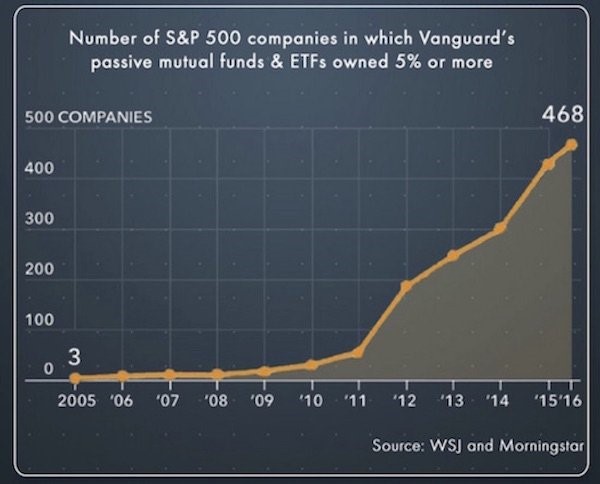

8.Vanguard owns more than 5% of 468 stocks in the S&P 500

http://www.businessinsider.com/why-active-funds-cant-beat-passive-funds-2017-6


9.Read of the Day…50% of Assets Under Management in the U.S. will be Passively Managed by Early 2018. No One Knows What Will Happen at 50% Passive But It Will be the Investing Question of the Century.

Has the meteoric rise of passive investing generated the “greatest bubble ever”?

The better we understand the baked-in biases of algorithmic investing, the closer we can come to answers.

In an article for Bloomberg View last week titled “Why It’s Smart to Worry About ETFs”, Noah Smith wrote the following prescient truth: “No one knows the basic laws that govern asset markets, so there’s a tendency to use new technologies until they fail, then start over.” As we explored in WILTW June 1, 2017, algorithmic accountability has become a rising concern among technologists as we stand at the precipice of the machine-learning age. For more than a decade, blind faith in the impartiality of math has suppressed proper accounting for the inevitable biases and vulnerabilities baked into the algorithms that dominate the Digital Age. In no sector could this faith prove more costly than finance.

The rise of passive investing has been well-reported, yet the statistics remain staggering. According to Bloomberg, Vanguard saw net inflows of $2 billion per day during the first quarter of this year. According to The Wall Street Journal, quantitative hedge funds are now responsible for 27% of all U.S. stock trades by investors, up from 14% in 2013. Based on a recent Bernstein Research prediction, 50% of all assets under management in the U.S. will be passively managed by early 2018.

In these pages, we have time and again expressed concern about the potential distortions passive investing is creating. Today, evidence is everywhere in the U.S. economy — record low volatility despite a news cycle defined by turbulence; a stock market controlled by extreme top-heaviness; and many no-growth companies seeing ever-increasing valuation divergences. As always, the key questions are when will passive strategies backfire, what will prove the trigger, and how can we mitigate the damage to our portfolios? The better we understand the baked-in biases of algorithmic investing, the closer we can come to answers.

Over the last year, few have sounded the passive alarm as loudly as Steven Bregman, co-founder of investment advisor Horizon Kinetics. He believes record ETF inflows have generated “the greatest bubble ever” — “a massive systemic risk to which everyone who believes they are well-diversified in the conventional sense are now exposed.”

Bregman explained his rationale in a speech at a Grant’s conference in October:

“In the past two years, the most outstanding mutual fund and holding-company managers of the past couple of decades, each with different styles, with limited overlap in their portfolios, collectively and simultaneously underperformed the S&P 500…There is no precedent for this. It’s never happened before. It is important to understand why. Is it really because they invested poorly? In other words, were they the anomaly for underperforming — and is it reasonable to believe that they all lost their touch at the same time, they all got stupid together? Or was it the S&P 500 that was the anomaly for outperforming? One part of the answer we know… If active managers behave in a dysfunctional manner, it will eventually be reflected in underperformance relative to their benchmark, and they can be dismissed. If the passive investors behave dysfunctionally, by definition this cannot be reflected in underperformance, since the indices are the benchmark.”

At the heart of passive “dysfunction” are two key algorithmic biases: the marginalization of price discovery and the herd effect. Because shares are not bought individually, ETFs neglect company-by-company due diligence. This is not a problem when active managers can serve as a counterbalance. However, the more capital that floods into ETFs, the less power active managers possess to force algorithmic realignments. In fact, active managers are incentivized to join the herd—they underperform if they challenge ETF movements based on price discovery. This allows the herd to crowd assets and escalate their power without accountability to fundamentals.

With Exxon as his example, Bregman puts the crisis of price discovery in a real-world context:

“Aside from being 25% of the iShares U.S. Energy ETF, 22% of the Vanguard Energy ETF, and so forth, Exxon is simultaneously a Dividend Growth stock and a Deep Value stock. It is in the USA Quality Factor ETF and in the Weak Dollar U.S. Equity ETF. Get this: It’s both a Momentum Tilt stock and a Low Volatility stock. It sounds like a vaudeville act…Say in 2013, on a bench in a train station, you came upon a page torn from an ExxonMobil financial statement that a time traveler from 2016 had inadvertently left behind. There it is before you: detailed, factual knowledge of Exxon’s results three years into the future. You’d know everything except, like a morality fable, the stock price: oil prices down 50%, revenue down 46%, earnings down 75%, the dividend-payout ratio almost 3x earnings. If you shorted, you would have lost money…There is no factor in the algorithm for valuation. No analyst at the ETF organizer—or at the Pension Fund that might be investing—is concerned about it; it’s not in the job description. There is, really, no price discovery. And if there’s no price discovery, is there really a market?”

We see a similar dynamic at play with quants. Competitive advantage comes from finding data points and correlations that give an edge. However, incomplete or esoteric data can mislead algorithms. So the pool of valuable insights is self-limiting: the more money quants manage, the more the same inputs and formulas are used, crowding certain assets. This dynamic is what caused the “quant meltdown” of 2007. Since then, quants have become more sophisticated as they integrate machine learning, yet the risk of overusing algorithmic strategies remains.

Writing about the bubble-threat quants pose, Wolf Street’s Wolf Richter pinpoints the herd problem:

“It seems algos are programmed with a bias to buy. Individual stocks have risen to ludicrous levels that leave rational humans scratching their heads. But since everything always goes up, and even small dips are big buying opportunities for these algos, machine learning teaches algos precisely that, and it becomes a self-propagating machine, until something trips a limit somewhere.”

As Richter suggests, there’s a flip side to the self-propagating coin. If algorithms have a bias to buy, they can also have a bias to sell. As we explored in WILTW February 11, 2016, we are concerned about how passive strategies will react to a severe market shock. If a key sector failure, a geopolitical crisis, or even an unknown, “black box” bias pulls an algorithmic risk trigger, will the herd run all at once? With such a concentrated market, an increasing amount of assets in weak hands have the power to create a devastating “sell” cascade—a risk tech giant stocks demonstrated over the past week.

With leverage on the rise, the potential for a “sell” cascade appears particularly threatening. Quant algorithms are designed to read market tranquility as a buy-sign for risky assets—another bias of concern. Currently, this is pushing leverage higher. As reported by The Financial Times, Morgan Stanley calculates that equity exposure of risk parity funds is now at its highest level since its records began in 1999.

This risk is compounded by the ETF transparency problem. Because assets are bundled, it may take dangerously long to identify a toxic asset. And once toxicity is identified, the average investor may not be able to differentiate between healthy and infected ETFs. (A similar problem exacerbated market volatility during the subprime mortgage crisis a decade ago.) As Noah Smith writes, this could create a liquidity crisis: “Liquidity in the ETF market might suddenly dry up, as everyone tries to figure out which ETFs have lots of junk and which ones don’t.”

J.P. Morgan estimated this week that passive and quantitative investors now account for 60% of equity assets, which compares to less than 30% a decade ago. Moreover, they estimate that only 10% of trading volumes now originate from fundamental discretionary traders. This unprecedented rate of change no doubt opens the door to unaccountability, miscalculation and, in turn, unforeseen consequences. We will continue to track developments closely as we try to pinpoint tipping points and safe havens. As we’ve discussed time and again with algorithms, advancement and transparency are most often opposing forces. If we don’t pry open the passive black box, we will miss the biases hidden within. And given the power passive strategies have rapidly accrued, perpetuating blind faith could prove devastating.

This article was originally published in “What I Learned This Week” on June 15, 2017. To subscribe to our weekly newsletter, visit 13D.com or find us on Twitter @WhatILearnedTW.

10.This Harvard Survey Reveals What Most People Miss About Leadership

What’s the most important thing a leader can do for the team? Explain to them what the business wants to achieve and why.

A recent survey conducted by The Harvard Business Review reveals that autonomy at work (not micromanagement) is the key to employee satisfaction and organizational achievement.

It describes this concept as “rebelling,” or giving employees the freedom to “deviate from organizational norms, others’ actions, or common expectations, to the benefit of the organization.”

This has been a core value at my company, Wattpad, since we launched a decade ago. We describe this frame of mind as that of a trailblazer. Trailblazers challenge themselves and others to think big and think differently. They are not afraid to lead, or to try something new.

This all sounds good on paper, but structure, vision, and process also need to be in place to run an effective organization. So, the question becomes: How do you create a workplace culture that produces results while providing staff with the freedom to do things their way?

How to lead a trailblazing team: Focus on outcomes.

Autonomy for your employees depends on clearly defining your company vision while grounding every project in logical outcomes. Issues arise when people get caught up in their output (how much work they’re producing) without a defined outcome (what it is they need to produce).

“Am I working on the right thing?” is a hard question to answer without understanding what the outcome should be. Here’s an example:

A CEO asks for a data report on referral traffic from Facebook (output), but what they really want is information on the top social media sites driving referral traffic to the company website (outcome).

In this case, the CEO will receive what they asked for, but the outcome they truly wanted will be missing. This ambiguity is what leads to missed quotas, internal frustration, and communication breakdowns. By clearly communicating expected outcomes at the beginning of a project, you keep people engaged by rooting their choices in strategic objectives.

Why outcomes empower your team.

When you hire the right people and share the outcomes you wish to achieve and why, you can trust them to figure out the how. Here’s an interesting example from the same HBR article:

The Ritz-Carlton allows staff members to spend up to $2,000 to address any customer complaint in the way they deem best. The hotel believes that a business is most successful when employees have well-defined standards, understand the reasoning behind them, and are given autonomy in carrying them out.

The report states that The Ritz-Carlton depends on 3,000 standards developed over the years to ensure a consistent customer experience at all its hotels. With that many standards in place, it’s easy for an employee to make decisions rooted in a clear outcome — exceptional customer experience.

When you work on or assign a task, clearly define your desired outcome to get the best results from your team. At Wattpad, we depend on seven core values to help root decisions within our strategy. These are the values that make us who we are, and make it enjoyable for us to come to work every day. They are the attributes that we strive to embody and they keep us all on the same page.

Clearly explain your vision and expected outcomes to inspire your staff to achieve great things. With clear outcomes, they’ll gain the confidence to get the right work done and in the process, you’ll achieve and grow together.


https://www.inc.com/allen-lau/a-harvard-report-on-management-reveals-what-most-leaders-miss.html